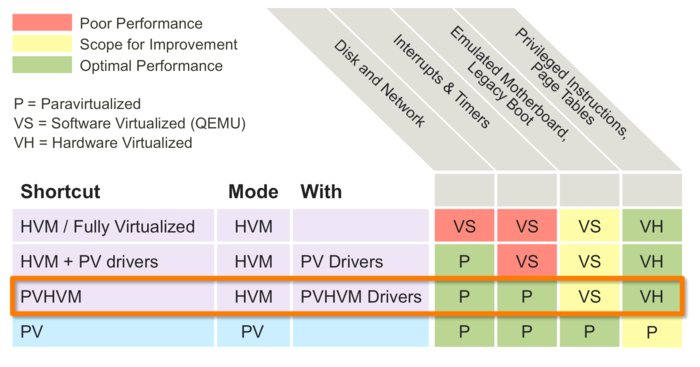

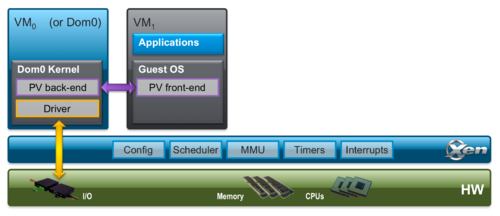

HVM with PV drivers: HVM extensions often offer instructions to support direct calls by a PV driver into the hypervisor, providing the performance benefits of paravirtualized I/O.

PVHVM: HVM with PVHVM drivers. PVHVM drivers are optimized PV drivers for HVM environments that bypass the emulation for disk and network I/O.

Any other organization is also free to do the same by registering a top-level PV device with the Xen Project community (see the Xen PCI device ID registry) and logo-signing their driver builds. The Win PV Drivers are built by a Jenkins server when new patches are pushed into the repo (Development Builds).

James, sorry if I missed it earlier, but on version 0.9.2 you mentioned a bug that would essentially lock up the dom0 system for 5 or so minutes if a Windows bug check occurred on a system running the GPL PV drivers.

With the launch of the new Xen Project pages, the main PV driver page on www.xenproject.org keeps a lot of the more current information regarding the paravirtualization drivers.

Supported Xen versions: Gplpv =0.11.0.213 were tested for a long time on Xen 4.0.x and are working, and should also work on Xen 4.1.

| Needs Review Important page: This page is probably out-of-date and needs to be reviewed and corrected! |

About

xenvbd is the driver that interfaces between the Windows scsiport miniport driver and the Linux blockback driver.

scsiport

Almost all of the Windows scsiport code runs at a very high IRQL, and there is a long list of things that cannot be done at a high IRQL. The main things are spinlocks, memory allocation, and waiting for events. scsiport makes sure that all the code is properly synchronised so spinlocks aren't a problem, and xenpci takes care of all the xenbus stuff so waiting for events isn't a problem. Not being able to allocate memory is a huge pain though.

x32 vs x64

Because of some alignment issues, the block front/back ring structure differs between 32-bit and 64-bit environments. This is a problem if Dom0 is one and DomU is the other. Later versions of Xen (3.2+, I think) take care of this by publishing the ABI used in xenbus and adjusting accordingly. My Dom0 is Debian, which at the time of writing has blockback code that predates this. To get around this we put a few requests on the ring and see what they look like when they come back. If our alignment is wrong we switch ring configurations on the fly. It's messy, but it works.
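To make the alignment problem concrete, here is a minimal sketch in C. It is not the xenvbd code. The struct layouts are modeled on my understanding of Xen's public block interface (a 64-bit `id` that a 32-bit build aligns on 4 bytes and a 64-bit build aligns on 8), and every name here (`req_abi32`, `req_abi64`, `detect_ring_abi`) is made up for illustration. The probe assumes each ring slot is as large as one request and that a response carries its `id` at the start of its slot.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define MAX_SEGS 11

/* One data segment of a request: 4 + 1 + 1 bytes, padded to 8. */
struct seg { uint32_t gref; uint8_t first_sect, last_sect; };

/* Layout as a 32-bit build sees it: 64-bit fields aligned on 4 bytes. */
#pragma pack(push, 4)
struct req_abi32 {
    uint8_t  operation;
    uint8_t  nr_segments;
    uint16_t handle;
    uint64_t id;             /* lands at offset 4 */
    uint64_t sector_number;
    struct seg seg[MAX_SEGS];
};
#pragma pack(pop)

/* Layout as a 64-bit build sees it: 64-bit fields aligned on 8 bytes. */
struct req_abi64 {
    uint8_t  operation;
    uint8_t  nr_segments;
    uint16_t handle;
    uint64_t id __attribute__((aligned(8)));  /* lands at offset 8 */
    uint64_t sector_number;
    struct seg seg[MAX_SEGS];
};

/* Sanity-check the offsets and sizes the two layouts are expected to have. */
static void check_layouts(void)
{
    assert(offsetof(struct req_abi32, id) == 4);
    assert(offsetof(struct req_abi64, id) == 8);
    assert(sizeof(struct req_abi32) == 108);
    assert(sizeof(struct req_abi64) == 112);
}

/*
 * Decide which slot size the other end used, given the ids of the n probe
 * requests we submitted. Slot 0 starts at the same place in both layouts,
 * so n must be at least 2 for the answer to mean anything.
 * Returns 32 or 64, or 0 if neither layout matches.
 */
static int detect_ring_abi(const uint8_t *slots, const uint64_t *ids, size_t n)
{
    const size_t stride[2] = { sizeof(struct req_abi32), sizeof(struct req_abi64) };
    const int abi[2] = { 32, 64 };

    for (int k = 0; k < 2; k++) {
        int ok = 1;
        for (size_t i = 0; i < n; i++) {
            uint64_t id;
            memcpy(&id, slots + i * stride[k], sizeof id);
            if (id != ids[i])
                ok = 0;
        }
        if (ok)
            return abi[k];
    }
    return 0;
}
```

The 4-byte difference in slot size is why a single probe request is not enough: the first slot looks the same either way, and only the second and later slots reveal which layout the other end is using.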

Unaligned buffers

xenvbd only allows buffers aligned on a 512-byte (sector size) boundary. Windows doesn't have this limitation, so it will occasionally hand xenvbd a buffer on almost any alignment. Almost all of the time these buffers are 512 bytes in size; rarely they are up to 4096 bytes, very rarely up to 8192 bytes, and even more rarely larger than that (I've only seen it when Windows does a chkdsk on boot).

Unfortunately we can't allocate bounce buffers on the fly, so to get around this we tell Windows we want a per-SRB (Windows SCSI request structure) buffer of 4096 bytes, pass that to blockback, and copy the data to the buffer (on write) or from the buffer (on read). If Windows wants to transfer more than that we have to go through the SRB multiple times. This is slower, but should happen rarely enough that performance isn't a problem.
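The bounce-buffer approach can be sketched roughly as follows. This is a simplified user-space model in C, not the driver code: `transfer`, `backend_io_fn` and `ram_disk_io` are hypothetical names, the backend is a plain memory copy standing in for blockback, and the loop stands in for going through the SRB several times.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define SECTOR_SIZE 512u
#define BOUNCE_SIZE 4096u   /* size of the preallocated per-request buffer */

/* Hypothetical backend hook: moves len bytes between buf and the disk. */
typedef void (*backend_io_fn)(void *ctx, int is_write, uint64_t sector,
                              uint8_t *buf, size_t len);

/* Stand-in backend for the example: ctx points at the disk image in RAM. */
static void ram_disk_io(void *ctx, int is_write, uint64_t sector,
                        uint8_t *buf, size_t len)
{
    uint8_t *disk = (uint8_t *)ctx + sector * SECTOR_SIZE;
    if (is_write)
        memcpy(disk, buf, len);
    else
        memcpy(buf, disk, len);
}

static int is_sector_aligned(const void *p)
{
    return ((uintptr_t)p % SECTOR_SIZE) == 0;
}

/*
 * Transfer len bytes starting at the given sector. An aligned buffer goes
 * to the backend directly in one pass. An unaligned buffer is staged
 * through the bounce buffer, at most BOUNCE_SIZE bytes per pass.
 * Returns the number of passes made.
 */
static unsigned transfer(void *ctx, backend_io_fn io, int is_write,
                         uint64_t sector, uint8_t *buf, size_t len,
                         uint8_t *bounce)
{
    unsigned passes = 0;

    assert(len % SECTOR_SIZE == 0);
    if (is_sector_aligned(buf)) {
        io(ctx, is_write, sector, buf, len);
        return 1;
    }
    while (len > 0) {
        size_t chunk = len < BOUNCE_SIZE ? len : BOUNCE_SIZE;
        if (is_write)
            memcpy(bounce, buf, chunk);      /* copy to the buffer on write */
        io(ctx, is_write, sector, bounce, chunk);
        if (!is_write)
            memcpy(buf, bounce, chunk);      /* copy from the buffer on read */
        buf += chunk;
        len -= chunk;
        sector += chunk / SECTOR_SIZE;
        passes++;
    }
    return passes;
}
```

With these sizes, an unaligned 512-byte or 4096-byte transfer takes a single pass, while an unaligned 8192-byte transfer takes two.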